Over the last 100 years, the world population has essentially quadrupled to eight billion people and is projected to increase to 10 billion sometime between 2055 and 2060 (United Nations, 2022). This growth in world population will accentuate energy demands and force a continuing and evolving subsurface evaluation of the earth. This subsurface evaluation will require exploration and development of oil and gas; carbon capture and storage; geothermal energy; and any other process where the interpretation of geology is paramount.

Addressing these needs will not be easy. Over the last decade, the geoscience workforce has shrunk for several reasons: fluctuating hydrocarbon prices contributing to poor retention rates at oil companies; a considerable drop in geoscience majors in universities; and a staffing transition referred to as “The Great Crew Change,” in which the large baby-boomer generation is retiring and leaving industry. To compensate for this workforce gap and meet the world’s future energy demands, companies will need to take advantage of technological advancements, such as artificial intelligence/machine learning and increased computing power.

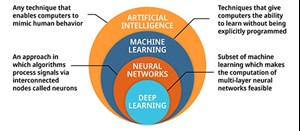

Fig. 1. Explanation of the symbiotic relationship between deep learning, neural networks, machine learning and artificial intelligence with benefits and capabilities.

Role of AI/ML. Artificial intelligence (AI) is any technique that enables computers to mimic human behavior, whereas machine learning (ML), a component of AI, refers to techniques that give computers the ability to learn without being explicitly programmed (Fig. 1). The advancements in AI/ML are directly related to the increase in computer power, and the future of geoscience technology will be driven by computer power and the associated AI/ML algorithms. In his 1997 book Visions, Michio Kaku predicted that Moore’s Law, which surmises that computer power doubles roughly every 18 months, would end sometime after 2020. Indeed, we are arguably reaching the limit of etching ever-smaller transistors onto silicon wafers because, at the molecular level, quantum effects prevail and Newtonian mechanics no longer applies.
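To put the 18-month doubling in perspective, the short sketch below compounds it over a span of years. The function name and the 1997 to 2020 interval are illustrative choices, and the calculation assumes an idealized, uninterrupted doubling rather than any measured hardware trend.

```python
def growth_factor(years: float, doubling_period_years: float = 1.5) -> float:
    """Idealized growth in computer power after `years`, assuming one
    doubling every `doubling_period_years` (18 months = 1.5 years)."""
    return 2.0 ** (years / doubling_period_years)


if __name__ == "__main__":
    # Illustrative span: from the publication of Visions (1997) to 2020.
    span = 2020 - 1997
    print(f"{span} years of 18-month doublings: x{growth_factor(span):,.0f}")
```

Even under this simplified assumption, two decades of doubling amounts to a gain of several orders of magnitude, which is why an end to the trend matters for compute-hungry geoscience workflows.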

Quantum computing. Many experts indicate that within a decade, a new form of computing, called quantum computing, will be fundamentally operational. Quantum computing is based on the laws of quantum mechanics, where physical matter at the subatomic level exhibits properties of both particles and waves. Hardware for quantum computers leverages this behavior. Classical processors use bits (0 or 1). Quantum computers employ qubits (0, 1, or a superposition of both). Describing the joint state of 500 qubits takes on the order of 2^500 classical values, far more than any conventional memory could hold. This enables the computation of multidimensional quantum algorithms (especially AI/ML). It is estimated that, for suitable problems, quantum computers will be many orders of magnitude faster than conventional computers.
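The scale of that qubit comparison can be sketched in a few lines. The snippet below counts the complex amplitudes needed to describe an n-qubit state on a classical machine and the memory a full state vector would occupy. The function names and the 16 bytes per amplitude (two 64-bit floats) are assumptions made for illustration.

```python
def num_amplitudes(n_qubits: int) -> int:
    """Number of complex amplitudes in a general n-qubit state: 2**n."""
    return 2 ** n_qubits


def statevector_bytes(n_qubits: int, bytes_per_amplitude: int = 16) -> int:
    """Memory to hold the full state vector classically, assuming each
    amplitude is stored as two 64-bit floats (16 bytes)."""
    return num_amplitudes(n_qubits) * bytes_per_amplitude


if __name__ == "__main__":
    for n in (10, 30, 50):
        gib = statevector_bytes(n) / 2 ** 30
        print(f"{n:>3} qubits: {num_amplitudes(n):,} amplitudes, {gib:,.6f} GiB")
    # 2**500 is too large to tabulate; report its size in decimal digits.
    print(f"500 qubits: a {len(str(num_amplitudes(500)))}-digit number of amplitudes")
```

Under these assumptions, a 30-qubit state already needs 16 GiB, and each added qubit doubles that figure, which is why classical simulation of even modest quantum registers becomes impractical.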

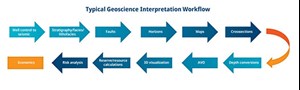

Fig. 2. Traditional sequential approach to geoscience interpretations of the subsurface.

Applications to geoscience interpretation workflows. Today, most geoscience interpretations of the subsurface are done in a serial fashion, where the interpretation processes are performed essentially one at a time. Figure 2 outlines a typical interpretation workflow used by the industry to explore for hydrocarbons. Since the late 1980s, seismic interpretation has evolved from 2D to 3D to 4D data (3D surveys through time), coupled with important advances in seismic data acquisition and processing, and the use of workstations, which has enabled consistent evaluations and more accuracy in the interpretation process.

However, there have been no dramatic step changes in the interpretation process itself, with the exception of machine learning in the last few years. Machine learning has enabled interpreters to handle very large amounts of data simultaneously, determine relationships of numerous (>3) types of data, discover nonlinearities not known from theory, improve efficiency and accuracy over time, and provide for the automation of interpretation processes.

Some of these machine learning approaches—including deep learning (convolutional neural networks), self-organizing maps, and semi-supervised methods—have been applied to solve many geological challenges. A few of the more successful applications of machine learning include fault/fracture delineation; defining stratigraphic facies distributions; lithofacies classification; well log correlation and prediction; and identifying seismic direct hydrocarbon indicators (DHIs).

Today, the necessary computer power and associated architecture for machine learning are basically centered around CPU and GPU configurations, high-performance computing, and the cloud. The potential of employing quantum computers to solve machine learning processes produces visions of streamlined automation, combining numerous different types of machine learning and applications of digital twinning.

For example, many, if not all, of the interpretation workflow steps in Fig. 2 could be automated, with the geoscientist providing minimal direction and quality control of the process. It is possible that quantum computing could help identify and combine many different types of machine learning to provide more accurate answers or even find geological elements undiscovered in our scientific data.

The use of synthetic data sets and digital twins with quantum computing could produce simulations of extremely complex systems, incorporating components that could never be applied presently. Quantum simulations for prospects and drilling portfolios could even perform risk analysis more accurately and optimize investment decisions beyond anything possible today. It is well known that rock property solutions derived from seismic reflection data are not unique. However, the application of quantum computing may enable the calibration and corroboration of seismic data with ground-truth data from well boreholes to limit the risk of non-uniqueness.
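A simple way to see the non-uniqueness mentioned above is the normal-incidence reflection coefficient, which depends only on the contrast in acoustic impedance (density times velocity). The sketch below uses hypothetical layer properties, chosen purely for illustration, to show two different rocks producing the same reflection.

```python
import math


def impedance(velocity_m_s: float, density_g_cc: float) -> float:
    """Acoustic impedance Z = density * velocity."""
    return density_g_cc * velocity_m_s


def reflection_coefficient(z_upper: float, z_lower: float) -> float:
    """Normal-incidence reflection coefficient R = (Z2 - Z1) / (Z2 + Z1)."""
    return (z_lower - z_upper) / (z_lower + z_upper)


# Hypothetical overburden and two candidate lower layers (illustrative values).
z_top = impedance(2000.0, 2.0)
z_rock_a = impedance(3000.0, 2.2)   # faster, less dense
z_rock_b = impedance(2750.0, 2.4)   # slower, denser, same impedance

r_a = reflection_coefficient(z_top, z_rock_a)
r_b = reflection_coefficient(z_top, z_rock_b)

if __name__ == "__main__":
    print(f"R for rock A: {r_a:.4f}")
    print(f"R for rock B: {r_b:.4f}")
    print("Indistinguishable from amplitude alone:", math.isclose(r_a, r_b))
```

Velocity or density measured in a borehole would separate the two candidates, which is the kind of ground-truth calibration the text refers to.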

Applications to seismic processing. One of the largest requirements for computer power today is related to 3D prestack depth migration seismic processing for the oil and gas industry. Seismic processing companies typically employ some of the largest supercomputers in the world. Even with extensive computing facilities, including cloud computing, the processing of enormous amounts of data with the latest algorithms can be limiting. For example, imaging beneath the salt in the Gulf of Mexico requires the application of Full Waveform Inversion (FWI) and the most advanced wave equation migration algorithms, such as Least Squares Reverse Time Migration (LSRTM). FWI was created to develop a velocity model of the earth’s subsurface that fully explains the seismic wavefields recorded in the seismic data (Monk, 2020).

However, most seismic processing today utilizes only the reflection component of the recorded data. Due to the iterative nature of FWI and the computational requirements for accurate simulation, FWI is highly compute-intensive and therefore limited by today’s compute environment. LSRTM is an inversion-based migration algorithm that minimizes the data misfit between observed seismic recordings and forward-modeled synthetic data (Wang et al., 2021). In most cases, LSRTM requires a very accurate velocity model and has a high computational cost.
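The iterative misfit minimization behind these methods can be illustrated with a toy problem. The sketch below is not LSRTM or FWI: a small hypothetical matrix stands in for the wave-equation modeling operator, and plain steepest descent with an exact line search stands in for the production optimizers. Every name and number in it is an assumption made for illustration.

```python
def matvec(a, x):
    """Forward modeling: synthetic data = A @ model."""
    return [sum(aij * xj for aij, xj in zip(row, x)) for row in a]


def rmatvec(a, y):
    """Adjoint ("migration") step: A^T @ data residual."""
    return [sum(a[i][j] * y[i] for i in range(len(a))) for j in range(len(a[0]))]


def dot(x, y):
    return sum(xi * yi for xi, yi in zip(x, y))


def least_squares_inversion(a, d_obs, n_iter=200):
    """Minimize ||d_obs - A m||^2 by steepest descent with exact line search."""
    m = [0.0] * len(a[0])
    misfit_history = []
    for _ in range(n_iter):
        residual = [o - s for o, s in zip(d_obs, matvec(a, m))]
        misfit_history.append(dot(residual, residual))
        gradient = rmatvec(a, residual)        # descent direction
        a_g = matvec(a, gradient)
        denom = dot(a_g, a_g)
        if denom == 0.0:                       # gradient vanished: converged
            break
        step = dot(gradient, gradient) / denom
        m = [mi + step * gi for mi, gi in zip(m, gradient)]
    return m, misfit_history


# Hypothetical 4-trace, 3-parameter operator and "true" model.
A = [[1.0, 0.5, 0.0],
     [0.5, 1.0, 0.5],
     [0.0, 0.5, 1.0],
     [0.2, 0.0, 0.2]]
m_true = [1.0, -0.5, 2.0]
d_obs = matvec(A, m_true)

m_est, history = least_squares_inversion(A, d_obs)

if __name__ == "__main__":
    print("estimated model:", [round(v, 4) for v in m_est])
    print(f"misfit: {history[0]:.3e} -> {history[-1]:.3e}")
```

Each pass requires one forward modeling and one adjoint application. In real surveys each of those is a full 3D wave-equation simulation, which is where the computational cost described above comes from.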

The common limitation to applying these advanced processing approaches is obviously computer power. With the development of quantum computers and associated new processing algorithms, the limitation of computer power will be eased. Since advanced processing algorithms like FWI and LSRTM require iterations with models, quantum computers will be able to get to an optimum solution in a fraction of the time.

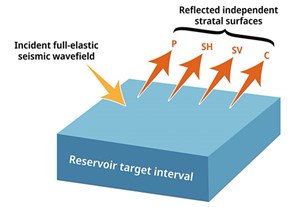

Fig. 3. Quantum computing will enable geophysicists to use full elastic wavefield data, which include all the waves generated by a source, in seismic processing and imaging.

The use of quantum computing will even enable geophysicists to accomplish the “holy grail” of seismic processing: seismic imaging using full elastic wavefield data (Fig. 3) that include all the waves generated by a source, such as primary reflections, transmitted waves, converted modes and their multiples (Huang et al., 2021). The fundamental assumption of employing full elastic wavefield data is that the various modes of the elastic wavefield can provide unique rock, fluid and sequence information across stratigraphic intervals (Hardage et al., 2011).

Using these new modeling techniques, we can finally image the earth as an anisotropic elastic solid. If quantum computing enables these types of advances in seismic processing, then seismic acquisition and design must also evolve, for example through routine application of long-offset ocean-bottom nodes. To truly accomplish what quantum computing can provide in seismic processing, it will be necessary to record the required low- and high-frequency ranges and full-azimuth multi-component data with appropriate sampling, supported by advances in acquisition equipment. AI/ML technology, coupled with quantum computing, is a unique, exciting combination that will allow analysis and recognition of seismic reflection patterns across various sedimentary basins. Moreover, companies will have the capacity to fully integrate their case histories of successes and failures. Optimum decision analysis, overseen by strong geoscientists and savvy managers, will draw within reach.

Applications to carbon capture and storage. Geological storage involves injecting liquid CO2 into deep porous rocks and then monitoring that rock system to ensure that the injected CO2 remains in a liquid state in the rock interval where it is placed. Quantum computing should be invaluable in geological CO2 storage. The key factor that makes quantum computing critically important in CO2 storage is the length of calendar time over which the data required for rigorous CO2 monitoring must be collected, processed and interpreted.

The important concept that must be kept in mind regarding the monitoring of sequestered CO2 is “CO2 storage must be permanent.” This permanent requirement means that the monitoring of sequestered CO2 at each worldwide CO2 storage site must span many millennia of calendar time. The volume of data that accumulates in monitoring each CO2 storage site will thus build to magnitudes that are immense and beyond what geoscientists have ever experienced. Quantum computing will, therefore, be essential for managing and utilizing CO2-storage activities.

Both P and S seismic reflection data are needed to monitor a CO2 storage reservoir. P-wave fading above a storage reservoir detects CO2 escaping in gaseous form. S-wave data need to be segregated into fast-S and slow-S images, because slow-S data are the first type of seismic data to detect emerging fractures that are harbingers of impending structural failure of a reservoir.

VSP data need to be acquired in addition to 3D3C surface-receiver data. 3C data will provide both P-SV and SV-P modes, which allows two versions of fast-S and slow-S data to be utilized. Versions of SV-P and P-SV images should be created, which will require that five seismic images be depth-registered (P-P, fast-S via P-SV, fast-S via SV-P, slow-S via P-SV, slow-S via SV-P).
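The count of five images follows from one P-P image plus every pairing of the two shear-wave splits with the two converted modes. A minimal enumeration, with variable names chosen only for illustration, makes the bookkeeping explicit:

```python
from itertools import product

# One compressional image, plus each S-wave split imaged through each converted mode.
converted_modes = ["P-SV", "SV-P"]
s_wave_splits = ["fast-S", "slow-S"]

images = ["P-P"] + [
    f"{split} via {mode}" for split, mode in product(s_wave_splits, converted_modes)
]

if __name__ == "__main__":
    for name in images:
        print(name)
    print("images to depth-register:", len(images))
```

Every repeat survey at a storage site adds another set of these five volumes, so the depth-registration workload grows with each monitoring cycle.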

The digital size of seismic databases involved in monitoring sequestered CO2 at only one storage site will quickly go beyond what geophysicists have ever used. Each reservoir database also must be expanded to include all well-log data and all core data that are acquired across a reservoir area, and all data that are recorded by stress sensors deployed at numerous downhole locations. The size of a database assembled at only one CO2 site over several millennia will grow to a size that database managers have never encountered to date.

New computer strategies, such as quantum computing, will be mandatory. This is especially true if a CO2 reservoir manager in generation 10 needs to reach back and include all previous 3D seismic data volumes acquired by generations 1 to 9 in an updated analysis of reservoir conditions that describes the chronological behavior of reservoir stability.

Challenges ahead. While the potential applications of quantum computing for subsurface evaluations are promising, there are still many challenges that need to be addressed. One of the biggest is the development of quantum algorithms that can effectively solve the problems faced in interpreting geology. The development of these algorithms requires expertise in both quantum computing and geophysics, making it a complex and challenging task. Additionally, the current state of quantum hardware is still in its infancy, with limited qubit counts and high error rates. That makes it difficult to apply quantum computing to large-scale problems, such as seismic analysis.

Conclusions. Despite challenges, there have been some extremely promising developments in the field of quantum computing and seismic analysis. In 2019, researchers at IBM developed a quantum algorithm for FWI that was able to solve a simplified version of the problem, using a quantum computer. While the algorithm was not able to outperform classical algorithms on the same problem, it demonstrated the potential of quantum computing in seismic analysis. Similarly, researchers at the University of Texas at Austin have developed a quantum-inspired algorithm for RTM that has shown promising results on synthetic data.

At this time, we can only speculate how practical advantages will be utilized to fully model seismic wave motions in the earth, how seismic patterns that reveal geology will be better appreciated, and how the decision process will improve to get better answers quicker.

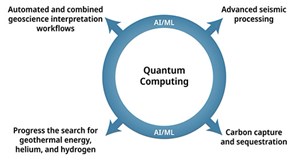

Fig. 4. The increased analytical power of quantum computing has vast potential to fulfill future requirements for exploring and developing hydrocarbons, CCS and the search for geothermal, helium and hydrogen.

Dramatic increases in computer power are necessary to fulfill future requirements for exploring and developing oil and gas resources, carbon capture and storage, and the search for geothermal energy, helium, and hydrogen (Fig. 4). Taking advantage of quantum mechanical effects, quantum computing can process millions of calculations concurrently. It is this quantum phenomenon that occurs at the sub-atomic level that provides encouragement that quantum computers will take geologic subsurface interpretation beyond anything we can imagine today and will be a major contributor to meeting the world’s energy needs.

REFERENCES

- Hardage, B. A., M. M. Backus, K. Fouad, R. J. Graebner, J. A. Kane, and D. C. Sava, 2004, Elastic-wavefield seismic stratigraphy: A new seismic imaging technology: Technical Progress Report, May 6, 2004, DOE Cooperative Agreement No. DE-FC26-03NT15396.

- Hardage, B. A., M. V. DeAngelo, P. E. Murray, and D. Sava, 2011, Multicomponent Seismic Technology: SEG Geophysical References Series No. 18.

- Huang, R., Z. Zhang, Z. Wu, Z. Wei, J. Mei, and P. Wang, 2021, Full-waveform inversion for full-wavefield imaging: Decades in the making: The Leading Edge, 40, No. 5.

- Kaku, M., 1997, Visions: Anchor Books.

- Monk, D. J., 2020, Survey design and seismic acquisition for land, marine, and in-between in light of new technology and techniques: SEG Distinguished Instructor Series, No. 23.

- Wang, B., Y. He, J. Mao, F. Liu, F. Hao, Y. Huang, M. Perz, and S. Michell, 2021, Inversion-based imaging: from LSRTM to FWI imaging: First Break, 39, No. 12.

About the Authors

Rocky Roden

Geophysical Insights

Rocky Roden has 45 years of industry experience working as a geophysicist, exploration/development manager, director of applied technology and chief geophysicist. He is currently senior advisor with Geophysical Insights, developing machine learning advances for geoscience interpretation, and is a principal in the Rose and Associates DHI Risk Analysis Consortium, which has involved over 85 oil companies around the world since 2001, developing a seismic amplitude risk analysis program and worldwide prospect database. He was previously with Texaco, Pogo Producing, Maxus Energy, YPF Maxus and Repsol, where he retired as chief geophysicist in 2001. Mr. Roden has authored or co-authored over 40 technical publications on various aspects of seismic interpretation, AVO analysis, amplitude risk assessment and geoscience machine learning. He also served as chairman of The Leading Edge editorial board.

Dr. Thomas Smith

Geophysical Insights

Dr. Thomas Smith received BS and MS degrees in geology from Iowa State University and a PhD in geophysics from the University of Houston. His graduate research focused on a shallow refraction investigation of the Manson astrobleme. In 1971, he joined Chevron as a processing geophysicist but resigned in 1980 to complete his doctoral studies in 3D modeling and migration at the Seismic Acoustics Laboratory of the University of Houston. After graduating with a PhD in geophysics in 1981, he started a geophysical consulting practice and taught seminars in seismic interpretation, seismic acquisition and seismic processing. Dr. Smith founded Seismic Micro-Technology (SMT) in 1984 to develop PC software to support training workshops he was holding. This subsequently led to development of the KINGDOM Software Suite for integrated geoscience interpretation. In 2008, he founded Geophysical Insights, where he leads a team of geophysicists, geologists and computer scientists in developing machine learning and deep learning technologies to address fundamental geophysical problems. The company launched the Paradise AI workbench in 2013, which uses machine learning, deep learning and pattern recognition technologies to extract greater information from seismic and well data. Dr. Smith has been a member of the SEG since 1967 and is a professional member of SEG, GSH, HGS, EAGE, SIPES, AAPG, Sigma Xi, SSA and AGU.

Michael Dunn

Geophysical Insights

Michael Dunn is an exploration executive with extensive global experience, including the Gulf of Mexico, Central America, Australia, China and North Africa. Mr. Dunn has a proven track record of successfully executing exploration strategies built on a foundation of new and innovative technologies. He serves as Senior Vice President, Business Development, for Geophysical Insights. He joined Shell in 1979 as an exploration geophysicist and party chief and held increasing levels of responsibility, including manager of interpretation research. In 1997, he participated in the launch of Geokinetics, which completed an IPO on the AMEX in 2007. His extensive experience with oil companies, including Shell and Woodside, in addition to time in the service sector with Geokinetics and Halliburton, has provided him with a unique perspective on technology and applications in oil and gas. Mr. Dunn received a BS degree in geology from Rutgers University and an MS degree in geophysics from University of Chicago.

Dr. Bob Hardage

Consultant

Dr. Bob Hardage is an industry consultant. He has 52 years of research and management activities at Phillips Petroleum, WesternAtlas and the Bureau of Economic Geology. He has been a member of the Society of Exploration Geophysicists for 56 years. SEG awarded Dr. Hardage a Special Commendation, Life Membership and Honorary Membership for his contributions to geophysics. He wrote the monthly Geophysical Corner column for AAPG’s Explorer magazine for six years and was honored by AAPG with a Distinguished Service Award for promoting geophysics among the geological community. He received a PhD in physics from Oklahoma State University.
